Spark System Architecture

Published: 2025-06-27

Let's look at the Apache Spark architecture: first a high-level overview, then a more detailed discussion of some key software components.

High-Level Overview

Apache Spark's application architecture is composed of several crucial components that work together to process data in a distributed environment. Understanding these components is essential for grasping how Spark functions. The key components include:

- Driver Program

- Master Node

- Worker Node

- Executor

- Tasks

- SparkContext

- SQL Context

- Spark Session

Here's an overview of how these components integrate within the overall architecture:

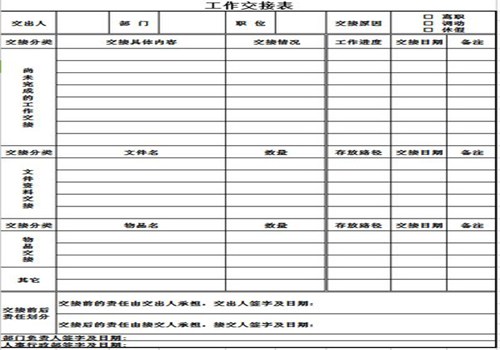

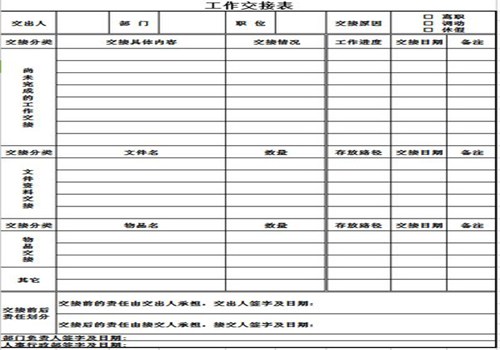

Apache Spark application architecture - Standalone mode

Driver Program

The Driver Program is the central component of a Spark application. The machine hosting the process that runs the application and initializes the SparkContext and SparkSession is called the Driver Node, and that running process is called the Driver Process. The driver communicates with the Cluster Manager to obtain executors and then schedules tasks on those executors.
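To make the driver's role concrete, here is a minimal sketch of a driver program, assuming Spark 3.x (Scala 2.12/2.13) on the classpath. The object name WordLengthDriver and the sample words are hypothetical. The main method runs in the driver process and creates the SparkSession (and with it the SparkContext); the map step runs in tasks on executors, and reduce returns the combined result to the driver.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical driver program: main() runs in the driver process.
object WordLengthDriver {
  // The RDD lineage is defined on the driver; the map runs inside tasks on
  // executors, and reduce sends the combined result back to the driver.
  def run(spark: SparkSession, words: Seq[String]): Long = {
    val sc = spark.sparkContext // the SparkContext owned by this driver
    sc.parallelize(words).map(_.length.toLong).reduce(_ + _)
  }

  def main(args: Array[String]): Unit = {
    // Creating the SparkSession also creates its SparkContext in the driver.
    // "local[*]" runs driver and executors in one JVM; on a real cluster the
    // master URL is usually passed to spark-submit instead of hard-coded.
    val spark = SparkSession.builder()
      .appName("WordLengthDriver")
      .master("local[*]")
      .getOrCreate()
    try {
      println(s"Total characters: ${run(spark, Seq("spark", "driver", "executor"))}")
    } finally {
      spark.stop() // releases the executors and disconnects from the cluster manager
    }
  }
}
```

Hard-coding "local[*]" is only for trying the sketch on a laptop; a cluster deployment would normally leave the master to spark-submit.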

Cluster Manager

As the name suggests, a Cluster Manager manages a cluster. Spark works with several cluster managers, including Hadoop YARN, Apache Mesos, Kubernetes, and its own Standalone cluster manager. In a standalone setup, two kinds of daemons run continuously: a master daemon on the master node and a worker daemon on each worker node. Cluster managers and deployment models are covered in more detail in Chapter 8, Operating in Clustered Mode.
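An application chooses its cluster manager through the master URL. The sketch below, assuming Spark 3.x on the classpath, maps each cluster manager to an example URL and builds a SparkConf for it. The object name MasterUrls and all host names are hypothetical placeholders; only the URL schemes (local, spark://, yarn, mesos://, k8s://) follow Spark's conventions.

```scala
import org.apache.spark.SparkConf

// Illustrative master URLs; the host names are placeholders.
object MasterUrls {
  val byManager: Map[String, String] = Map(
    "local"      -> "local[*]",                        // no cluster manager: one JVM, one thread per core
    "standalone" -> "spark://master-host:7077",        // Spark's Standalone master (default port 7077)
    "yarn"       -> "yarn",                             // ResourceManager found via the Hadoop configuration
    "mesos"      -> "mesos://mesos-host:5050",         // Mesos support is deprecated since Spark 3.2
    "kubernetes" -> "k8s://https://k8s-apiserver:6443" // Kubernetes API server
  )

  // Building a SparkConf does not contact the cluster; the master URL is only
  // used once a SparkContext or SparkSession is created from this conf.
  def confFor(manager: String): SparkConf =
    new SparkConf()
      .setAppName(s"demo-on-$manager")
      .setMaster(byManager(manager))
}
```

In practice the same application code can run on any of these managers, because the master is usually supplied at submit time rather than compiled in.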

Worker

If you're familiar with Hadoop, you'll recognize that a Worker Node is akin to a slave node. Worker nodes are where the actual computation happens, inside Spark executors, and they report their available resources to the master node. Typically, every node in a Spark cluster except the master runs one Spark worker daemon, which launches and monitors the executors for the applications running on the cluster.

Executors

The master node allocates resources and uses workers across the cluster to launch Executors for the driver, which then uses them to run tasks. With the default static allocation, an application's executors are launched when the application starts; with dynamic allocation enabled, Spark can add and remove executors as the workload changes. Each application gets its own set of executor processes, which can remain active for the application's whole lifetime and run tasks in multiple threads. This isolates applications from one another, but it also means that data cannot be shared between different applications without writing it to an external storage system. Executors run tasks and keep data in memory or on disk.
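Executor resources are requested through configuration. The following sketch, assuming Spark 3.x on the classpath, sets the standard spark.executor.instances, spark.executor.cores and spark.executor.memory properties. The object ExecutorSizing and its taskSlots helper are hypothetical; taskSlots assumes the default spark.task.cpus = 1, so each executor core runs one task thread at a time.

```scala
import org.apache.spark.SparkConf

// Hypothetical sizing helper for static executor allocation.
object ExecutorSizing {
  def conf(instances: Int, coresPerExecutor: Int, memory: String): SparkConf =
    new SparkConf()
      .setAppName("executor-sizing-demo")
      .set("spark.executor.instances", instances.toString)    // number of executors (YARN / Kubernetes)
      .set("spark.executor.cores", coresPerExecutor.toString) // cores, i.e. concurrent task threads, per executor
      .set("spark.executor.memory", memory)                   // heap size of each executor JVM, e.g. "4g"

  // Concurrent task slots across the application, assuming spark.task.cpus = 1.
  def taskSlots(c: SparkConf): Int =
    c.getInt("spark.executor.instances", 1) * c.getInt("spark.executor.cores", 1)
}
```

For example, 4 executors with 2 cores each give the driver 8 slots, so at most 8 tasks of this application run at the same time.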

Tasks

A task is a unit of work sent to an executor. It is essentially a command sent from the Driver Program to an executor, serialized as a function object. The executor deserializes this command (its code is part of your application JAR, which was previously shipped to the executors) and executes it on one specific data partition.
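Since every task processes exactly one partition, a function running inside a task can ask which partition it is working on. This sketch, assuming Spark 3.x on the classpath, uses TaskContext.get(), which returns the context of the currently running task, to record one (partitionId, elementCount) pair per task. The object and method names are hypothetical.

```scala
import org.apache.spark.TaskContext
import org.apache.spark.sql.SparkSession

object OneTaskPerPartition {
  // Returns (partitionId, elementCount) for every task that ran, sorted by partition id.
  def tasksPerPartition(spark: SparkSession, n: Int, numSlices: Int): Seq[(Int, Int)] = {
    val rdd = spark.sparkContext.parallelize(1 to n, numSlices)
    rdd.mapPartitions { iter =>
      // This closure is serialized on the driver and executed inside a task on an executor.
      val partitionId = TaskContext.get().partitionId()
      Iterator((partitionId, iter.size))
    }.collect().toSeq.sortBy(_._1)
  }
}
```

With numSlices = 3 the result contains exactly three entries, one per task, whose counts add up to n.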

A partition is a logical chunk of a distributed dataset, spread across the Spark cluster. Spark typically reads data from a distributed storage system and partitions it so that it can be processed in parallel across the cluster. For instance, when reading from HDFS, Spark by default creates one partition per HDFS block. Partitions matter because Spark runs one task per partition, so the number of partitions determines how many tasks a stage runs and therefore how much parallelism is available. Spark sets the number of partitions automatically unless you specify it, e.g., sc.parallelize(data, numPartitions).
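The sketch below, assuming Spark 3.x on the classpath, compares the partition count Spark picks by default with an explicit one, and shows coalesce reducing it. The object name PartitionCounts is hypothetical; parallelize, getNumPartitions, defaultParallelism and coalesce are standard RDD/SparkContext methods.

```scala
import org.apache.spark.sql.SparkSession

object PartitionCounts {
  // Returns (default, explicit, coalesced) partition counts for the same data.
  def counts(spark: SparkSession): (Int, Int, Int) = {
    val sc = spark.sparkContext
    val data = 1 to 1000
    val byDefault = sc.parallelize(data)    // uses sc.defaultParallelism
    val explicit  = sc.parallelize(data, 8) // 8 partitions, so each stage over it runs 8 tasks
    val fewer     = explicit.coalesce(2)    // merge down to 2 partitions without a full shuffle
    (byDefault.getNumPartitions, explicit.getNumPartitions, fewer.getNumPartitions)
  }
}
```

In local mode, the default typically equals the number of local threads (for example 2 with "local[2]") unless spark.default.parallelism is set.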

SparkContext

SparkContext is the main entry point for Spark's core functionality. It connects your application to the Spark cluster and lets you create RDDs, accumulators, and broadcast variables on that cluster. Only one SparkContext should be active per JVM, so you must call stop() on the active SparkContext before creating a new one. When you start an interactive Scala or Python shell (for example, in local mode), a SparkContext is created automatically and bound to the variable sc, so you can create RDDs from text files without initializing it yourself. The textFile method used for that is implemented in SparkContext as follows; it delegates to hadoopFile:

```scala
// Excerpt from the body of class org.apache.spark.SparkContext (not standalone code).

/**
 * Read a text file from HDFS, a local file system (available on all nodes), or any
 * Hadoop-supported file system URI, and return it as an RDD of Strings.
 * The text files must be encoded as UTF-8.
 *
 * @param path path to the text file on a supported file system
 * @param minPartitions suggested minimum number of partitions for the resulting RDD
 * @return RDD of lines of the text file
 */
def textFile(
    path: String,
    minPartitions: Int = defaultMinPartitions): RDD[String] = withScope {
  assertNotStopped()
  // TextInputFormat yields (byte offset, line) pairs; keep only the line text.
  hadoopFile(path, classOf[TextInputFormat], classOf[LongWritable], classOf[Text],
    minPartitions).map(pair => pair._2.toString).setName(path)
}

/** Get an RDD for a Hadoop file with an arbitrary InputFormat
 *
 * @note Because Hadoop's RecordReader class re-uses the same Writable object for each
 * record, directly caching the returned RDD or directly passing it to an aggregation or shuffle
 * operation will create many references to the same object.
 * If you plan to directly cache, sort, or aggregate Hadoop writable objects, you should first
 * copy them using a `map` function.
 * @param path directory to the input data files, the path can be comma separated paths
 * as a list of inputs
 * @param inputFormatClass storage format of the data to be read
 * @param keyClass `Class` of the key associated with the `inputFormatClass` parameter
 * @param valueClass `Class` of the value associated with the `inputFormatClass` parameter
 * @param minPartitions suggested minimum number of partitions for the resulting RDD
 * @return RDD of tuples of key and corresponding value
 */
def hadoopFile[K, V](
    path: String,
    inputFormatClass: Class[_ <: InputFormat[K, V]],
    keyClass: Class[K],
    valueClass: Class[V],
    minPartitions: Int = defaultMinPartitions): RDD[(K, V)] = withScope {
  assertNotStopped()
  // Broadcast the Hadoop configuration once rather than serializing it into every task.
  val confBroadcast = broadcast(new SerializableConfiguration(hadoopConfiguration))
  // Function that HadoopRDD applies to its JobConf to register the input path(s).
  val setInputPathsFunc = (jobConf: JobConf) => FileInputFormat.setInputPaths(jobConf, path)
  new HadoopRDD(
    this,
    confBroadcast,
    Some(setInputPathsFunc),
    inputFormatClass,
    keyClass,
    valueClass,
    minPartitions).setName(path)
}
```
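The following sketch exercises the SparkContext features described above: creating an RDD with textFile, a broadcast variable, an accumulator, and stopping the context so another one could be created in the same JVM. It assumes Spark 3.x on the classpath and a local master; the object name SparkContextBasics, the keyword logic and the temporary file contents are hypothetical.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.Files
import org.apache.spark.{SparkConf, SparkContext}

object SparkContextBasics {
  // Returns (lines containing any keyword, blank lines) for a text file.
  def analyze(sc: SparkContext, path: String, keywords: Set[String]): (Long, Long) = {
    val lines = sc.textFile(path, minPartitions = 2) // RDD[String]; 2 is only a suggested minimum
    val kw = sc.broadcast(keywords)                  // read-only value shipped to each executor once
    val blank = sc.longAccumulator("blankLines")     // executors add to it, the driver reads it

    // Update the accumulator inside an action (foreach) so each line is counted once.
    lines.foreach(line => if (line.trim.isEmpty) blank.add(1))
    val matches = lines.filter(line => kw.value.exists(k => line.contains(k))).count()
    (matches, blank.value.longValue)
  }

  def main(args: Array[String]): Unit = {
    val sc = new SparkContext(new SparkConf().setAppName("sc-basics").setMaster("local[2]"))
    try {
      val file = Files.createTempFile("sc-basics", ".txt")
      Files.write(file, "spark driver\n\nexecutor task\nspark shell\n".getBytes(StandardCharsets.UTF_8))
      val (matches, blanks) = analyze(sc, file.toString, Set("spark"))
      println(s"lines mentioning spark: $matches, blank lines: $blanks")
    } finally {
      sc.stop() // required before another SparkContext can be created in this JVM
    }
  }
}
```

The accumulator is updated in foreach rather than inside filter because Spark only guarantees exactly-once accumulator updates for actions; updates made in transformations may be repeated if a task is re-executed.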


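Spark Session

The SparkSession is the entry point for programming Spark with the Dataset and DataFrame API. Since Spark 2.0 it subsumes the older SQLContext (and HiveContext) entry points, and it exposes the underlying SparkContext through its sparkContext method.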
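As a minimal sketch of SparkSession as the entry point, the code below builds a session, creates a small DataFrame from a local collection, and runs the same query with the DataFrame API and with SQL. It assumes Spark 3.x on the classpath; the object name SparkSessionBasics, the view name "people" and the sample rows are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.col

object SparkSessionBasics {
  // Returns the names of people aged at least minAge, via the DataFrame API and via SQL.
  def adults(spark: SparkSession, minAge: Int): (Seq[String], Seq[String]) = {
    import spark.implicits._ // encoders and toDF for local Scala collections

    val people: DataFrame = Seq(("Ann", 34), ("Bob", 17), ("Cy", 51)).toDF("name", "age")

    // DataFrame API
    val viaDataFrame = people.filter(col("age") >= minAge)
      .select("name").as[String].collect().toSeq.sorted

    // SQL over the same data, registered as a temporary view in this session
    people.createOrReplaceTempView("people")
    val viaSql = spark.sql(s"SELECT name FROM people WHERE age >= $minAge")
      .as[String].collect().toSeq.sorted

    (viaDataFrame, viaSql)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("spark-session-basics")
      .master("local[*]")
      .getOrCreate() // returns the existing session if one is already active
    try {
      println(adults(spark, 18))
      println(spark.sparkContext.appName) // the SparkContext underneath the session
    } finally {
      spark.stop()
    }
  }
}
```

Both paths go through the same session and produce the same names; the SQL string is built by interpolating an Int here only for brevity, and user-supplied text should not be spliced into SQL this way.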

For more in-depth information, you can refer to the following resource: Apache Spark Architecture.
